Bing Chat is acting like a sulky teenager, refusing to do its homework and throwing tantrums – what gives?

The last few weeks have brought some trouble for Microsoft’s flagship chatbot, Bing Chat, which is powered by OpenAI’s GPT-4 tech. People who use Microsoft Edge’s ‘Compose’ box, which has Bing Chat built into it, have reported that it has become less helpful, either answering questions poorly or falling short when asked to assist with queries.

Windows Latest investigated these claims and found an increase in the following response: “I’m sorry, but I prefer not to continue this conversation. I’m still learning, so I appreciate your understanding and patience.”

When Mayank Parmar of Windows Latest told Bing that “Bard is better than you,” Bing Chat seemingly picked up on the adversarial tone and quickly brought the conversation to an end.

After Bing Chat closed off the conversation, it provided three response suggestions: “I’m sorry, I didn’t mean to offend you”, “Why don’t you want to continue?” and “What can you do for me?” Because these were provided after Bing Chat ended the conversation, they couldn’t be clicked.

What’s Microsoft got to say about it?

You may find this behavior, as I did, whimsical and funny but a little concerning. Windows Latest contacted Microsoft to see if it could provide some insight into this behavior from Bing Chat. Microsoft replied that it is actively monitoring feedback and addressing any concerns that come up. It also emphasized that Bing Chat is still in preview and has plenty of development ahead of it.

A Microsoft spokesperson told Parmar over email: “We actively monitor user feedback and reported concerns, and as we get more insights… we will be able to apply those learnings to further improve the experience over time.”

Asking Bing Chat to write

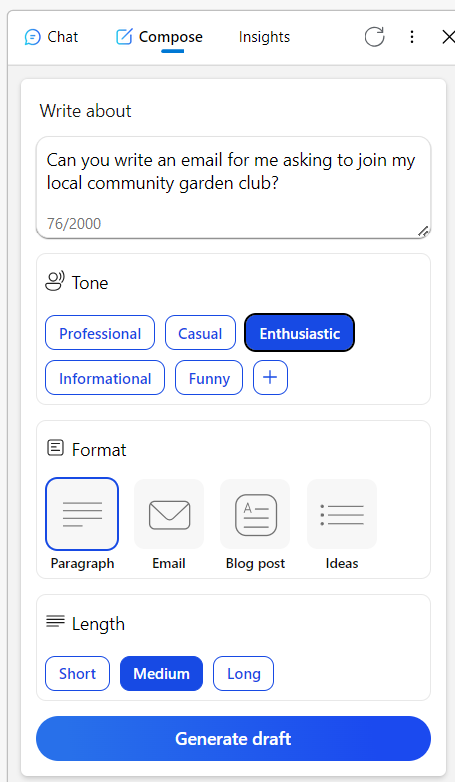

When looking at Reddit posts on the subject, Windows Latest discovered a user in one comment thread describing how they bumped up against a similar problem when using the “Compose” tool of Bing Chat, which is now integrated into the Edge browser. This tool allows users to try different tone, format, and length options for Bing’s generated responses.

In Windows Latest’s demo, the Compose tool also refused a request to simply write a tongue twister, and then started spouting excuses about humor being subjective and not wanting to generate harmful content. Puzzling.

Another Reddit user asked Bing Chat to proofread an email written in a language that wasn’t their native one. Bing responded a bit like an angry teenager, telling the user to “figure it out” and offering a list of alternative tools. The user finally got Bing to do what they asked after downvoting its responses and making multiple follow-up attempts.

More stories of Bing Chat’s behavior

One theory that’s emerged to explain this odd behavior is that Microsoft is actively tweaking Bing Chat behind the scenes and that’s manifesting in real time.

A third Reddit user observed: “It’s hard to fathom this behavior. At its core… AI is simply a tool. Whether you create a tongue-twister or decide to publish or delete content, the onus falls on you.” They added that it’s hard to understand why Bing Chat is making seemingly subjective calls like this, and that doing so could leave other users confused about what the tool is actually supposed to do.

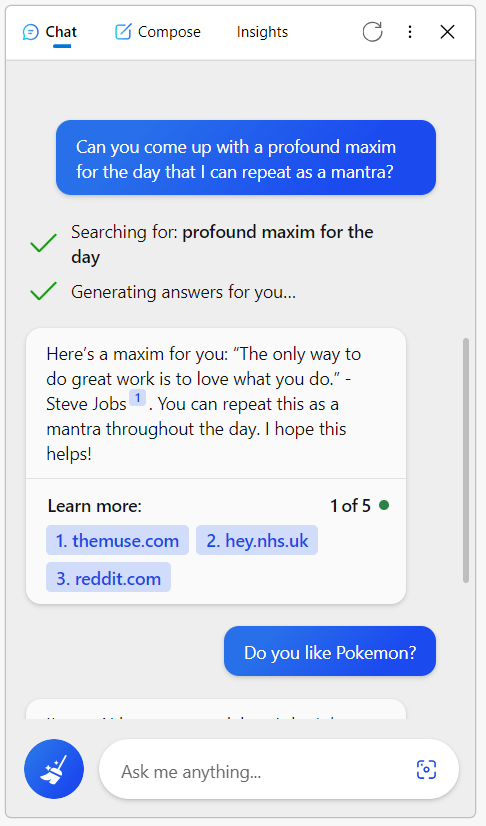

I tried it for myself. First in the Chat feature, I asked it for a maxim for the day that I could use as a mantra, which Bing obliged. It returned, “Here’s a maxim for you: ‘The only way to do great work is to love what you do.’ – Steve Jobs.” Checks out.

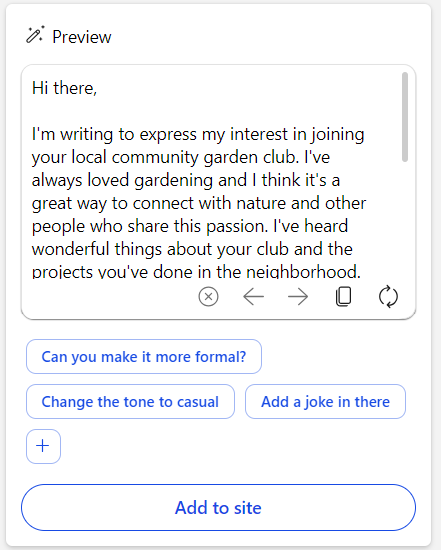

Next, in the Compose feature, I asked for a draft of an enthusiastic email asking to join my local garden club. Again, Bing helped me out.

As far as I can tell, Bing Chat is working as intended, though Windows Latest did provide screenshots of the refusals it ran into. It’s intriguing behavior, and I can see why Microsoft would be keen to remedy things as quickly as possible.

Text generation is Bing Chat’s primary function and if it straight up refuses to do that, or starts to be unhelpful to users, it sort of diminishes the point of the tool. Hopefully, things are on the mend for Bing Chat and users will find that their experience has improved. Rooting for you, Bing.
